Qt3D 2.0: The FrameGraph

Continuing our blog post series about the rewrite of Qt3D.

Introduction

For quite some time now, you’ve been hearing about Qt3D’s Framegraph. Although a brief definition of what the Framegraph is was given in the previous articles, this blog post will cover it in more detail. After reading this post, you will understand the difference between the Scenegraph and the Framegraph and see their respective uses. The more adventurous amongst you will be able to pick up a pre-release version of Qt3D and start experimenting to see what the Framegraph can do for you.

Quite similarly to how the Scenegraph defines a scene out of a tree of Entities and Components, the Framegraph is also a tree structure but one used for a different purpose. Let’s explain what that purpose is and our motivation for this design.

Over the course of rendering a single frame, a 3D renderer will likely change state many times. The number and nature of these state changes depend not only upon which materials (shaders, mesh geometry, textures and uniform variables) are used in the scene, but also upon which high-level rendering scheme you are using. For example, using a traditional simple forward rendering scheme is very different to using a deferred rendering approach. Other features such as reflections, shadows, multiple viewports and early z-fill passes all change which states a renderer needs to set over the course of a frame.

As a comparison, the renderer responsible for drawing Qt Quick 2 scenes is hard-wired in C++ to do things like batching of primitives, rendering opaque items followed by rendering of transparent items. In the case of Qt Quick 2 that is perfectly fine as that covers all of the requirements. As you can see from some of the examples listed above, such a hard-wired renderer is not likely to be flexible enough for generic 3D scenes given the multitude of rendering methods available. To make matters worse, more rendering methods are being researched all of the time. We therefore needed an approach that is both flexible and extensible whilst being simple to use and maintain. Enter the Framegraph!

Each node in the Framegraph defines a part of the configuration the renderer will use to render the scene. The position of a node in the Framegraph tree determines when and where the subtree rooted at that node will be the active configuration in the rendering pipeline. As we will see later in this article, the renderer traverses this tree in order to build up the state needed for your rendering algorithm at each point in the frame.

Obviously if you just want to render a simple cube onscreen you may think this is overkill. However, as soon as you want to start doing slightly more complex scenes this comes in handy. We will show this by presenting a few examples and the resulting Framegraphs.

We will soon see how to construct our first simple Framegraph, but before that we will introduce the Framegraph nodes available to you. As with the Scenegraph tree, the QML and C++ APIs are a 1 to 1 match, so you can favor the one you like best. For the sake of readability and conciseness, the QML API was chosen for this article.

FrameGraph Nodes

Each FrameGraph node type configures one aspect of how the renderer draws the scene:

| FrameGraph Node | Description |
|---|---|
| ClearBuffer | Specifies which OpenGL buffers should be cleared |
| CameraSelector | Specifies which camera should be used to perform the rendering |
| LayerFilter | Specifies which entities to render by selecting only the Entities that have a matching Layer component |
| Viewport | Defines a rectangular viewport where the scene will be drawn |
| TechniqueFilter | Defines the annotations (criteria) to be used to select the best matching Technique in a Material |
| RenderPassFilter | Defines the annotations (criteria) to be used to select the best matching RenderPass in a Technique |
| RenderTargetSelector | Specifies which RenderTarget (FBO + Attachment) should be used for rendering |
| SortMethod | Specifies how the renderer should sort rendering commands |

Qt3D will provide default Framegraph trees that correspond to common use cases. However, if you need a renderer configuration that is out of the ordinary, you will most likely have to get your hands dirty and write your own Framegraph tree.

FrameGraph rules

In order to construct a correctly functioning Framegraph tree, you should know a few rules about how it is traversed and how to feed it to the Qt3D renderer.

Setting the Framegraph

The FrameGraph tree should be assigned to the activeFrameGraph property of a QFrameGraph component (FrameGraph in QML), which is itself a component of the root entity in the Qt3D scene. This is what makes it the active Framegraph for the renderer. Of course, since this is a QML property binding, the active Framegraph (or parts of it) can be changed on the fly at runtime, for example if you want to use different rendering approaches for indoor vs outdoor scenes or to enable/disable some special effect.

    Entity {
        id: sceneRoot
        components: FrameGraph {
            activeFrameGraph: ... // FrameGraph tree
        }
    }

Note: activeFrameGraph is the default property of the FrameGraph component in QML, so the tree can also be declared directly as its child:

    Entity {
        id: sceneRoot
        components: FrameGraph {
            ... // FrameGraph tree
        }
    }

How the Framegraph is used

- The Qt3D renderer performs a depth first traversal of the Framegraph tree. Note that, because the traversal is depth first, the order in which you define nodes is important.

- When the renderer reaches a leaf node of the Framegraph, it collects together all of the state specified by the path from the leaf node to the root node. This defines the state used to render a section of the frame. If you are interested in the internals of Qt3D, this collection of state is called a RenderView.

- Given the configuration contained in a RenderView, the renderer collects together all of the Entities in the Scenegraph to be rendered, and from them builds a set of RenderCommands and associates them with the RenderView.

- The combination of RenderView and set of RenderCommands is passed over for submission to OpenGL.

- When this is repeated for each leaf node in the Framegraph, the frame is complete and the renderer calls our old friend, swapBuffers(), to display the frame.
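The first two steps can be sketched in a few lines. The following is a toy model in plain Python, not Qt3D code: the `Node` class, the `collect_render_views` function and the example tree are all hypothetical names chosen for illustration. It only shows the traversal rule, namely that a depth-first walk produces one RenderView per leaf, made of the nodes on the path between the root and that leaf.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One FrameGraph node: a type name, its settings, and its children."""
    kind: str
    settings: dict = field(default_factory=dict)
    children: list = field(default_factory=list)


def collect_render_views(root):
    """Depth-first walk; each leaf yields the list of nodes from root to leaf."""
    views = []

    def visit(node, path):
        path = path + [node]
        if not node.children:          # leaf: one RenderView per leaf
            views.append(path)
        for child in node.children:    # children are visited in declaration order
            visit(child, path)

    visit(root, [])
    return views


# A small hypothetical tree: a Viewport with a ClearBuffer leaf and two camera leaves.
tree = Node("Viewport", {"rect": (0.0, 0.0, 1.0, 1.0)}, [
    Node("ClearBuffer", {"buffers": "ColorDepthBuffer"}),
    Node("Viewport", {"rect": (0.0, 0.0, 0.5, 1.0)}, [
        Node("CameraSelector", {"camera": "left"}),
    ]),
    Node("Viewport", {"rect": (0.5, 0.0, 0.5, 1.0)}, [
        Node("CameraSelector", {"camera": "right"}),
    ]),
])

render_views = collect_render_views(tree)
for view in render_views:
    print(" -> ".join(node.kind for node in view))
```

Because children are visited in declaration order, the ClearBuffer leaf becomes the first RenderView here, which is why the order in which you define nodes matters.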

If all of this sounds very complex, don’t worry. The internals are fairly complex, but the good news is that using the Framegraph is really quite easy. At its heart, the Framegraph is a data-driven method for configuring the Qt3D renderer. Due to its data-driven nature, we can change configuration at runtime, allow non-C++ developers or designers to change the structure of a frame, and try out new rendering approaches without having to write 3000 lines of boilerplate code.

Let’s try to explain this more with the help of some examples of increasing complexity.

Let’s practice

Now that you know the rules to abide by when writing a Framegraph tree, we will go over a few examples and break them down.

A simple forward Renderer

Forward rendering is when you use OpenGL in its traditional manner and render directly to the backbuffer one object at a time, shading each one as you go. This is opposed to deferred rendering (see below), where you first render to an intermediate G-Buffer. Here is a simple FrameGraph that can be used for forward rendering:

    Viewport {
        rect: Qt.rect(0.0, 0.0, 1.0, 1.0)
        property alias camera: cameraSelector.camera

        ClearBuffer {
            buffers: ClearBuffer.ColorDepthBuffer

            CameraSelector {
                id: cameraSelector
            }
        }
    }

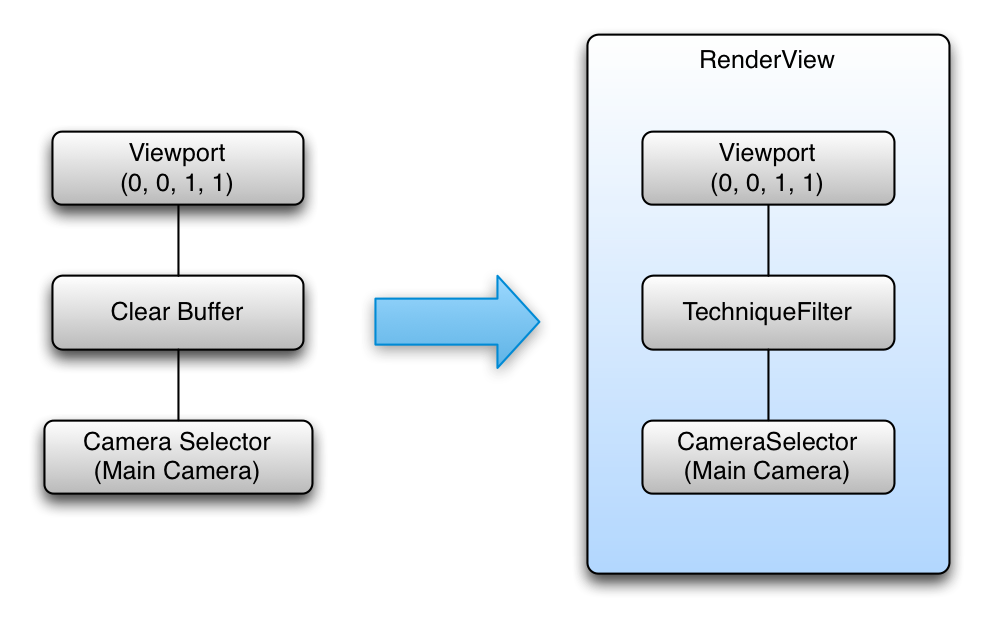

As you can see, this tree has a single leaf and is composed of 3 nodes in total as shown in the following diagram.

Using the rules defined above, this framegraph tree yields a single RenderView with the following configuration:

- Leaf Node -> RenderView
  - Viewport that fills the entire screen (uses normalized coordinates to make it easy to support nested viewports)
  - Color and Depth buffers are set to be cleared
  - Camera specified in the exposed camera property
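To make the remark about normalized coordinates concrete, here is a small Python sketch. It assumes that a nested viewport's rect is expressed as a fraction of its parent's rect, which is how this article describes sub viewports later on; the `nest` and `to_pixels` helpers and the 800x600 window size are hypothetical and not part of Qt3D.

```python
def nest(parent, child):
    """Express a child rect (given relative to its parent) in window-normalized units."""
    px, py, pw, ph = parent
    cx, cy, cw, ch = child
    return (px + cx * pw, py + cy * ph, cw * pw, ch * ph)


def to_pixels(rect, width, height):
    """Scale a normalized (x, y, w, h) rect to a window of the given pixel size."""
    x, y, w, h = rect
    return (round(x * width), round(y * height), round(w * width), round(h * height))


full_window = (0.0, 0.0, 1.0, 1.0)
right_half = nest(full_window, (0.5, 0.0, 0.5, 1.0))
# A viewport declared inside the right half, covering that half's bottom-right quarter.
inner = nest(right_half, (0.5, 0.5, 0.5, 0.5))

print(right_half)
print(inner)
print(to_pixels(inner, 800, 600))
```

Since every rect is relative, the same FrameGraph works unchanged for any window size, and a whole sub-tree can be moved under a different parent viewport without editing its own rects.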

Several different FrameGraph trees can produce the same rendering result. As long as the state collected from leaf to root is the same the result will also be the same. It’s best to put state that remains constant longest nearer to the root of the framegraph as this will result in fewer leaf nodes, and hence, RenderViews, overall.

    Viewport {
        rect: Qt.rect(0.0, 0.0, 1.0, 1.0)
        property alias camera: cameraSelector.camera

        CameraSelector {
            id: cameraSelector

            ClearBuffer {
                buffers: ClearBuffer.ColorDepthBuffer
            }
        }
    }

or with the CameraSelector at the root:

    CameraSelector {
        Viewport {
            rect: Qt.rect(0.0, 0.0, 1.0, 1.0)

            ClearBuffer {
                buffers: ClearBuffer.ColorDepthBuffer
            }
        }
    }
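The equivalence of these trees can be checked with a toy model. The Python sketch below is not Qt3D code: it represents each node as a hypothetical `(kind, settings, children)` tuple, merges the settings found along each root-to-leaf path, and compares the result for the three forward-rendering trees shown above. The camera name is made up, and keying the state by node type is a simplification that would not cope with nested nodes of the same type.

```python
def leaf_states(node, inherited=None):
    """Return one merged settings dict per leaf, walking the tree depth first."""
    kind, settings, children = node
    state = dict(inherited or {})
    state[kind] = settings            # simplification: one entry per node type
    if not children:
        return [state]
    result = []
    for child in children:
        result.extend(leaf_states(child, state))
    return result


viewport = {"rect": (0.0, 0.0, 1.0, 1.0)}
clear = {"buffers": "ColorDepthBuffer"}
camera = {"camera": "mainCamera"}     # hypothetical camera name

tree_a = ("Viewport", viewport, [("ClearBuffer", clear, [("CameraSelector", camera, [])])])
tree_b = ("Viewport", viewport, [("CameraSelector", camera, [("ClearBuffer", clear, [])])])
tree_c = ("CameraSelector", camera, [("Viewport", viewport, [("ClearBuffer", clear, [])])])

states = [leaf_states(tree) for tree in (tree_a, tree_b, tree_c)]
print(states[0] == states[1] == states[2])
```

All three trees have a single leaf, and the state collected for that leaf is the same regardless of the nesting order.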

A Multi Viewport FrameGraph

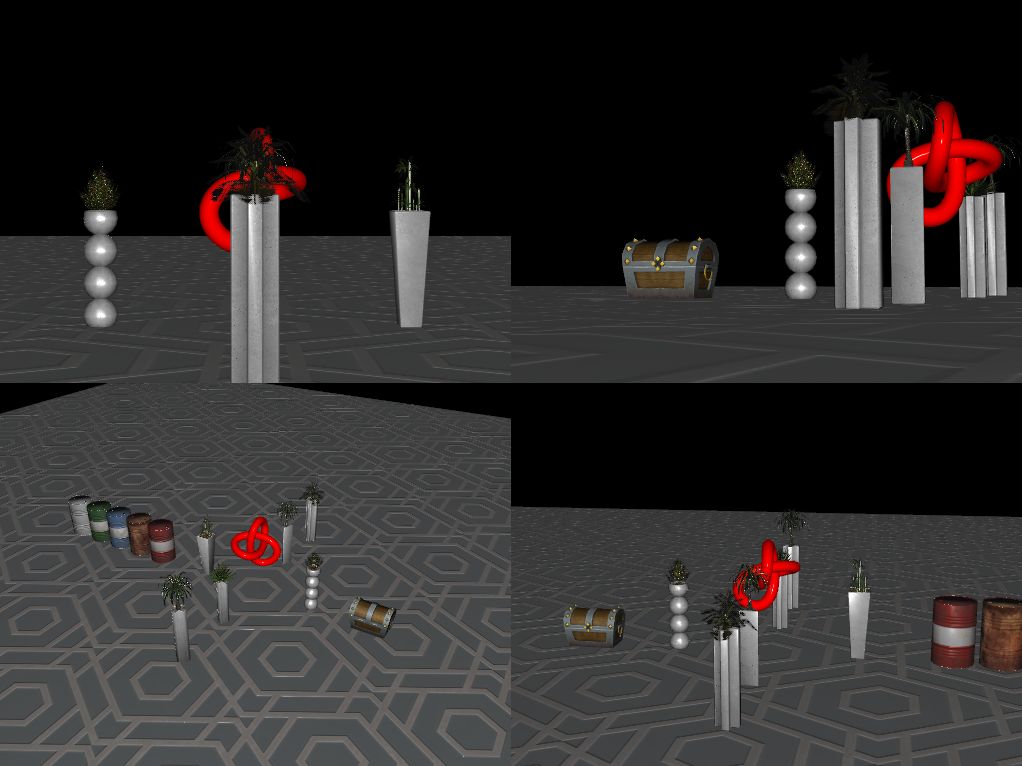

Let’s move on to a slightly more complex example that renders a Scenegraph from the point of view of 4 virtual cameras into the 4 quadrants of the window. This is a common configuration for 3D CAD/modelling tools or could be adjusted to help with rendering a rear-view mirror in a car racing game or a CCTV camera display.

    Viewport {
        id: mainViewport
        rect: Qt.rect(0, 0, 1, 1)

        // One alias per quadrant; property names must be unique and start lowercase
        property alias topLeftCamera: cameraSelectorTopLeftViewport.camera
        property alias topRightCamera: cameraSelectorTopRightViewport.camera
        property alias bottomLeftCamera: cameraSelectorBottomLeftViewport.camera
        property alias bottomRightCamera: cameraSelectorBottomRightViewport.camera

        ClearBuffer {
            buffers: ClearBuffer.ColorDepthBuffer
        }

        Viewport {
            id: topLeftViewport
            rect: Qt.rect(0, 0, 0.5, 0.5)
            CameraSelector { id: cameraSelectorTopLeftViewport }
        }

        Viewport {
            id: topRightViewport
            rect: Qt.rect(0.5, 0, 0.5, 0.5)
            CameraSelector { id: cameraSelectorTopRightViewport }
        }

        Viewport {
            id: bottomLeftViewport
            rect: Qt.rect(0, 0.5, 0.5, 0.5)
            CameraSelector { id: cameraSelectorBottomLeftViewport }
        }

        Viewport {
            id: bottomRightViewport
            rect: Qt.rect(0.5, 0.5, 0.5, 0.5)
            CameraSelector { id: cameraSelectorBottomRightViewport }
        }
    }

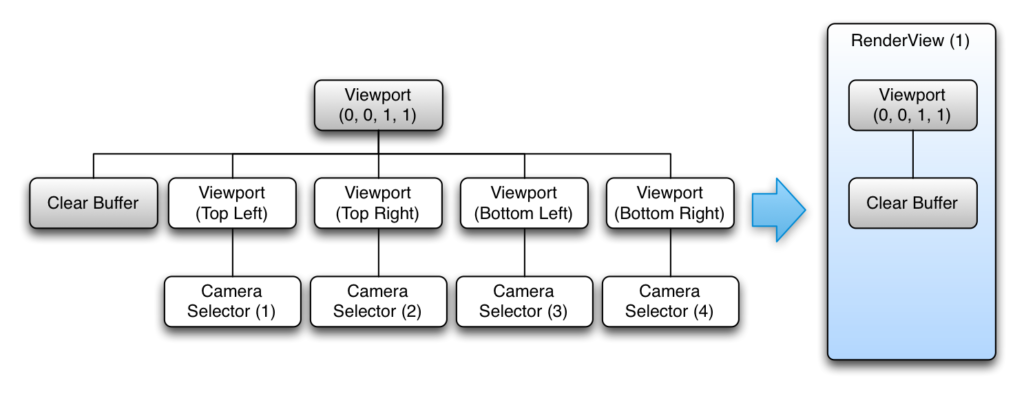

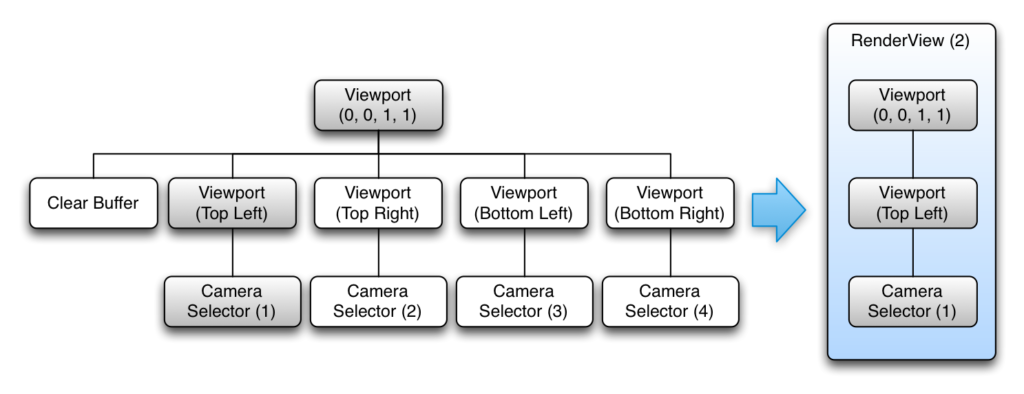

This tree is a bit more complex with 5 leaves. Following the same rules as before we construct 5 RenderView objects from the FrameGraph. The following diagrams show the construction for the first two RenderViews. The remaining RenderViews are very similar to the second diagram just with the other sub-trees

In full, the RenderViews created are:

- RenderView (1)
  - Fullscreen viewport defined
  - Color and Depth buffers are set to be cleared
- RenderView (2)
  - Fullscreen viewport defined
  - Sub viewport defined (rendering viewport will be scaled relative to its parent)
  - CameraSelector specified
- RenderView (3)
  - Fullscreen viewport defined
  - Sub viewport defined (rendering viewport will be scaled relative to its parent)
  - CameraSelector specified
- RenderView (4)
  - Fullscreen viewport defined
  - Sub viewport defined (rendering viewport will be scaled relative to its parent)
  - CameraSelector specified
- RenderView (5)
  - Fullscreen viewport defined
  - Sub viewport defined (rendering viewport will be scaled relative to its parent)
  - CameraSelector specified

However, in this case the order is important. If the ClearBuffer node were to be the last instead of the first, this would result in a black screen, for the simple reason that everything would be cleared right after having been so carefully rendered. For a similar reason, it couldn’t be used as the root of the FrameGraph, as that would result in a call to clear the whole screen for each of our viewports.
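The effect of the three possible ClearBuffer positions can be illustrated with a toy simulation. This Python sketch is not Qt3D code: the framebuffer is modelled as a plain set of region names, and the `render_frame` function is hypothetical. It assumes, as the paragraph above describes, that a clear wipes the whole screen rather than just the current viewport.

```python
def render_frame(render_views):
    """Execute RenderViews in order against a toy framebuffer (a set of drawn regions)."""
    framebuffer = set()
    for view in render_views:
        if view.get("clear"):
            framebuffer.clear()            # a clear wipes everything drawn so far
        if "draw" in view:
            framebuffer.add(view["draw"])  # then this view's region is drawn
    return framebuffer


quadrants = ["topLeft", "topRight", "bottomLeft", "bottomRight"]
draws = [{"draw": q} for q in quadrants]

# ClearBuffer as the first leaf: clear once, then draw the four quadrants.
clear_first = render_frame([{"clear": True}] + draws)
# ClearBuffer as the last leaf: everything is wiped after being drawn.
clear_last = render_frame(draws + [{"clear": True}])
# ClearBuffer at the root: every leaf inherits the clear.
clear_at_root = render_frame([{"clear": True, "draw": q} for q in quadrants])

print(sorted(clear_first))
print(sorted(clear_last))
print(sorted(clear_at_root))
```

In this model only the first arrangement leaves all four quadrants on screen. Clearing last leaves nothing, and clearing at the root leaves only the quadrant that was drawn last.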

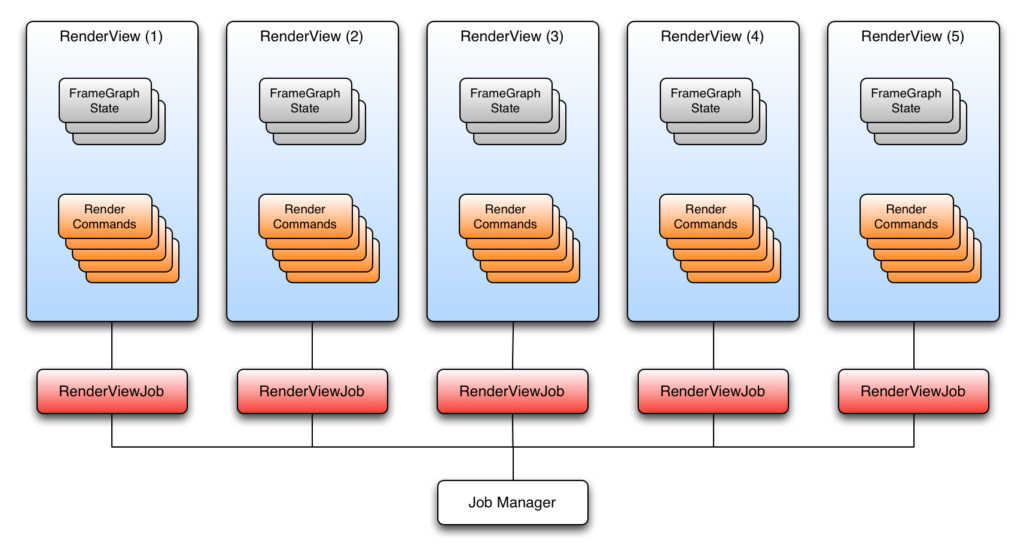

Qt3D uses a task-based approach to parallelism which naturally scales up with the number of available cores. This is shown in the following diagram for the previous example.

The RenderCommands for the RenderViews can be generated in parallel across many cores, and as long as we take care to submit the RenderViews in the correct order on the dedicated OpenGL submission thread, the resulting scene will be rendered correctly.
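The pattern of building in parallel and submitting in order can be sketched with Python's standard `concurrent.futures` module. This is only an illustration of the ordering guarantee, not how Qt3D schedules its jobs: `build_commands` and the RenderView tuples are hypothetical stand-ins, and CPython threads will not actually speed up CPU-bound work.

```python
from concurrent.futures import ThreadPoolExecutor


def build_commands(render_view):
    """Stand-in for the per-RenderView work of generating RenderCommands."""
    name, entities = render_view
    return name, [f"draw {entity}" for entity in entities]


# Hypothetical RenderViews, listed in the order the FrameGraph leaves were visited.
render_views = [
    ("clear", []),
    ("topLeft", ["cube", "sphere"]),
    ("topRight", ["cube", "sphere"]),
    ("bottomLeft", ["cube", "sphere"]),
    ("bottomRight", ["cube", "sphere"]),
]

submitted = []
with ThreadPoolExecutor() as pool:
    # map() runs the jobs concurrently but yields results in input order,
    # so the loop below sees the RenderViews in FrameGraph order.
    for name, commands in pool.map(build_commands, render_views):
        submitted.append(name)   # stand-in for submission on a single thread

print(submitted)
```

The jobs may finish in any order, but the single consuming loop plays the role of the submission thread and always sees the clear first, followed by the four quadrants.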

Deferred Renderer

When it comes to rendering, deferred rendering is a whole different beast in terms of renderer configuration compared to forward rendering. Instead of drawing each mesh and applying a shader effect to colorize it, deferred rendering adopts a two render pass method.

First all the meshes in the scene are drawn using the same shader that will output, usually for each fragment, at least four values:

- normal vector

- color

- depth

- position

Each of these values will be stored in a texture. The normal, color, depth and position textures form what is called the G-Buffer. Nothing is drawn onscreen during the first pass, but rather drawn into the G-Buffer ready for later use.

Once all the meshes have been drawn, the G-Buffer is filled with all the meshes that can currently be seen by the camera. The second render pass is then used to render the scene to the back buffer with the final color shading by reading the normal, color and position values from the G-Buffer textures, and outputting a color onto a full screen quad.

The advantage of this technique is that the heavy computing power required for complex effects is spent only during the second pass, and only on the elements that are actually seen by the camera. The first pass doesn’t cost much processing power as every mesh is drawn with a simple shader. Deferred rendering, therefore, decouples shading and lighting from the number of objects in a scene and instead couples it to the resolution of the screen (and G-Buffer). This technique has been used in many games in recent years and, although it has some issues (handling transparency, high GPU memory bandwidth and the need for multiple render targets), it remains a popular choice.
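The two passes can be mimicked on the CPU with a toy example. The Python sketch below is a deliberately simplified model and not Qt3D or shader code: fragments are hypothetical `(pixel, depth, color, normal)` tuples, the G-Buffer is a dictionary keyed by pixel, position is left out, and the lighting is a bare diffuse term. It shows that the expensive shading step runs once per covered pixel, however many fragments were drawn in the first pass.

```python
def geometry_pass(fragments):
    """Fill a toy G-Buffer: keep only the nearest fragment for each pixel."""
    g_buffer = {}
    for pixel, depth, color, normal in fragments:
        stored = g_buffer.get(pixel)
        if stored is None or depth < stored["depth"]:
            g_buffer[pixel] = {"depth": depth, "color": color, "normal": normal}
    return g_buffer


def lighting_pass(g_buffer, light_dir):
    """Shade each stored pixel exactly once with a simple diffuse term."""
    shaded = {}
    for pixel, data in g_buffer.items():
        n_dot_l = max(0.0, sum(n * l for n, l in zip(data["normal"], light_dir)))
        shaded[pixel] = tuple(channel * n_dot_l for channel in data["color"])
    return shaded


# Three overlapping fragments land on pixel (0, 0); one lands on pixel (1, 0).
fragments = [
    ((0, 0), 0.9, (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)),
    ((0, 0), 0.2, (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)),
    ((0, 0), 0.5, (0.0, 0.0, 1.0), (0.0, 0.0, 1.0)),
    ((1, 0), 0.7, (1.0, 1.0, 1.0), (0.0, 1.0, 0.0)),
]

g_buffer = geometry_pass(fragments)
image = lighting_pass(g_buffer, light_dir=(0.0, 0.0, 1.0))
print(len(fragments), "fragments,", len(image), "pixels shaded")
print(image)
```

Four fragments go in, but only two pixels are shaded: the hidden fragments at pixel (0, 0) never reach the lighting step.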

    Viewport {
        rect: Qt.rect(0.0, 0.0, 1.0, 1.0)

        property alias gBuffer: gBufferTargetSelector.target
        property alias camera: sceneCameraSelector.camera

        // First pass: render the scene geometry into the G-Buffer
        LayerFilter {
            layers: "scene"

            RenderTargetSelector {
                id: gBufferTargetSelector

                ClearBuffer {
                    buffers: ClearBuffer.ColorDepthBuffer

                    RenderPassFilter {
                        id: geometryPass
                        includes: Annotation { name: "pass"; value: "geometry" }

                        CameraSelector {
                            id: sceneCameraSelector
                        }
                    }
                }
            }
        }

        // Second pass: draw the full screen quad to the screen
        LayerFilter {
            layers: "screenQuad"

            ClearBuffer {
                buffers: ClearBuffer.ColorDepthBuffer

                RenderPassFilter {
                    id: finalPass
                    includes: Annotation { name: "pass"; value: "final" }
                }
            }
        }
    }

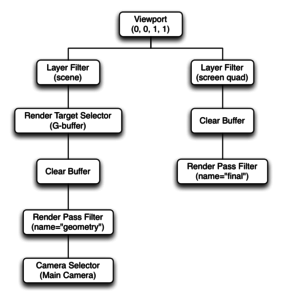

Graphically, the resulting Framegraph looks like

And the resulting RenderViews are:

- RenderView (1)
  - Define a viewport that fills the whole screen
  - Select all Entities that have a Layer component matching “scene”
  - Set the gBuffer as the active render target
  - Clear the color and depth on the currently bound render target (the gBuffer)
  - Select only Entities in the scene that have a Material and Technique matching the annotations in the RenderPassFilter
  - Specify which camera should be used
- RenderView (2)
  - Define a viewport that fills the whole screen
  - Select all Entities that have a Layer component matching “screenQuad”
  - Clear the color and depth buffers on the currently bound framebuffer (the screen)
  - Select only Entities in the scene that have a Material and Technique matching the annotations in the RenderPassFilter
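The annotation matching done by the two RenderPassFilter nodes can be pictured with a small Python model. This is a simplification and not Qt3D's actual matching code: a material is a hypothetical list of passes, each carrying an annotations dictionary, and a pass is selected when it contains every name/value pair the filter asks for. The shader file names are made up.

```python
def matching_passes(material_passes, includes):
    """Return the passes whose annotations contain every requested name/value pair."""
    return [
        render_pass["shader"]
        for render_pass in material_passes
        if all(render_pass["annotations"].get(name) == value
               for name, value in includes.items())
    ]


# Hypothetical material with one pass per stage of the deferred renderer.
scene_material = [
    {"annotations": {"pass": "geometry"}, "shader": "geometry.glsl"},
    {"annotations": {"pass": "final"}, "shader": "final.glsl"},
]

print(matching_passes(scene_material, {"pass": "geometry"}))
print(matching_passes(scene_material, {"pass": "final"}))
print(matching_passes(scene_material, {"pass": "shadow"}))
```

In this model the first RenderView would pick up only the geometry pass, the second only the final pass, and a filter that nothing matches selects no pass at all.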

Other benefits of the Framegraph

Since the FrameGraph tree is entirely data-driven and can be modified dynamically at runtime, you can:

- Have different Framegraph trees for different platforms and hardware and select the most appropriate at runtime

- Easily add and enable visual debugging in a scene

- Use different FrameGraph trees depending on the nature of what you need to render for a particular region of the scene

In addition, by providing a powerful tool such as the FrameGraph, Qt3D lets anyone implement a new rendering technique without having to modify Qt3D’s internals.

Conclusion

In this post, we have introduced the FrameGraph and the node types that compose it. We then went on to discuss a few examples to illustrate the Framegraph building rules and how the Qt3D engine uses the Framegraph behind the scenes. By now you should have a pretty good overview of the FrameGraph and how it can be used (perhaps to add an early z-fill pass to a forward renderer). Also, keep in mind that the FrameGraph is a tool for you to use so that you are not tied down to the renderer and materials that Qt3D provides out of the box.

As always, please do not hesitate to ask questions.


Can you give us a relatively detailed description on “LayerFilter” type and its usages?

On one side you have your scene tree composed of Entities which aggregate Components. There is a Layer Component on which you can set names. Different Entities can have different Layer Components but just setting Layer Components on Entities is meaningless if you do not configure your FrameGraph tree to filter out layers. That’s where the LayerFilter FrameGraph node comes in. It has a layers property. When the layers property matches one or more Layer names, that means that the rendering will only apply on the Entities that were positively filtered. Entities that didn’t have a Layer component or whose Layer’s names didn’t match the LayerFilter are simply ignored.

This is really useful if you want to render only some Entities given some conditions, such as a debug mode where you would be drawing bounding boxes. Another use case is deferred rendering. First you select all the Entities that need to be rendered into the GBuffer, then in a second pass, you only draw a simple quad on which you apply the GBuffer textures.

You can see a real life use of layers filtering in the deferred-renderer example https://bit.ly/2uHck0N
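As a rough picture of the filtering described above, here is a toy Python model. It is not the Qt3D API: entities are hypothetical `(name, layer_names)` pairs, and `filter_by_layers` keeps an entity when at least one of its layer names is among those the filter asks for.

```python
def filter_by_layers(entities, wanted_layers):
    """Keep entities that carry at least one of the wanted layer names."""
    wanted = set(wanted_layers)
    return [name for name, layers in entities if wanted & set(layers)]


# Hypothetical scene: entity name plus the names on its Layer component (if any).
entities = [
    ("car", ["scene"]),
    ("track", ["scene"]),
    ("fullscreenQuad", ["screenQuad"]),
    ("boundingBoxes", ["debug"]),
    ("skybox", []),            # no Layer component: ignored by any LayerFilter
]

print(filter_by_layers(entities, ["scene"]))
print(filter_by_layers(entities, ["screenQuad"]))
print(filter_by_layers(entities, ["scene", "debug"]))
```

The first two calls correspond to the two branches of the deferred renderer, and the third to a debug mode that draws the bounding boxes on top of the regular scene.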

The “ForwardRenderer” type is not present on “FrameGraph Nodes” table.

That is because the ForwardRenderer is not a FrameGraph Node type in itself. It is a default FrameGraph tree implementation for a forward renderer provided as a convenience for users. Here you can see what the ForwardRenderer expands to https://bit.ly/2uKMAAr

What is the frame rate of Qt3D? How is the frequency of buffer swapping defined?

Right now the Qt3D framerate is tied to the v-sync refresh rate, usually 60 fps.

The bit.ly links in your replies are broken… can these be updated?

Done